That’s a nicely concise description, thanks!

And, as said, I agree with both of you. The finer point I want to make is that you shouldn’t be required to be a software developer in order to express/expand thinking and action via a computational medium, in the same way that you shouldn’t be required to be an engine pilot/mechanic/engineer in order to experience convivial mobility via bike riding.

This takes me to your second point:

That I would like to interpret from Wolfgang Jonas’s theory of design as the connection of autopoietic systems

Autopoiesis in biological systems can be viewed as a network of constraints that work to maintain themselves. This concept has been called organizational closure[6] or constraint closure[7] and is closely related to the study of autocatalytic chemical networks where constraints are reactions required to sustain life.

with allopoietic ones:

An autopoietic system is to be contrasted with an allopoietic system, such as a car factory, which uses raw materials (components) to generate a car (an organized structure) which is something other than itself (the factory). However, if the system is extended from the factory to include components in the factory’s “environment”, such as supply chains, plant / equipment, workers, dealerships, customers, contracts, competitors, cars, spare parts, and so on, then as a total viable system it could be considered to be autopoietic

Here the definition of the system’s frontier is key, as autopoietic systems have a membrane that connects them with the exterior and allows them to exchange material and energy in order to reproduce themselves. This includes social systems[1].

And our local communities are in contact with external communities, including developer ones, without being themselves developer communities. Here the question is how computational thinking and metatool (de)construction is preserved/extended inside the community in connection with the broader external world, so we can be as autonomous as we need/want. For that, we have been holding long and short workshops for about a decade. We call them Data Weeks and Data Rodas [2], and there we do proactive documentation and living memory, like the one in the Grafoscopedia or the Documentathon. So, I would add to @Apostolis’s comment regarding the education of new generations of members in the community an explicit invitation to put diverse social practices at the center, in contrast with a discourse that has been mostly dominated by digital artifacts when we discuss malleable systems, so that the social part of such systems is mostly kept invisible.

Thinking about convivial computing invites us to think beyond specialized castes and towards the constant deconstruction of specialization. My approach to that has been to make the self-referentiality of techno-social systems more explicit: the community that defines itself via its own rules can incorporate self-defining digital metatools that offer a continuum from usage to modification, without its members being software developers, while keeping, via social practices, the living knowledge about the tools and the communities around them, to bootstrap the reciprocal modification of communities and their tools.

Here I will not go into the valid criticisms of autopoiesis, as I think the concept still holds regarding the argument I present here. ↩︎

Data Rodas was a name coined also in contrast with the already taken “coding dojo”, which emphasizes katas and form provided by classical algorithms (do the 99 bottles, find prime factors). Data Rodas instead focus on embodied data about the world, who makes/publishes it, who benefits from it, and a more festive practice to approach such questions, including food, beers, coffee and dancing, when we meet to learn from each other, including non-programmers. ↩︎

Speaking of copying and reproduction of knowledge..

Can we measure memes?

From Frontiers in Evolutionary Neuroscience, Volume 3 (2011) https://doi.org/10.3389/fnevo.2011.00001

This paper briefly reviews the biologically based work on the evolution of language. The paper concludes cognitive neuroscience can, and should, apply considerable weight to the investigation of memetics. Firstly, the paper suggests that although memes may not be quantifiable, the traces of the neural processes involved in memetic replication and storage within the central nervous system (CNS) are measurable. Producing artificial memes for study is easily achieved and has already been done within experimental protocols in a number of fields.

It has been suggested that the sudden explosion of what is considered fundamentally human: consciousness, culture, language, and intellect is a consequence of our evolved capacity to imitate (Donald, 1993). That the driving force for this cultural explosion was the generation of a second environmental space in which memes drove biological selection as well as genes (Blackmore, 1999). A meme is a replicator, a cultural unit operating under Darwinian evolutionary principles analogous to a gene, but a distinct replicator in its own right.

Sometime between the appearance of the first stone tools created by Homo habilis (2.5 million years ago) and the arrival of Homo erectus (1.5 million years ago) our ancestors developed a considerably larger brain and more advanced mimetic culture (Donald, 1993). It is claimed that imitation conferred considerable advantage to imitators as they rapidly learned skills (fire making, hunting tactics) from others, circumventing less efficient trial and error approaches.

Blackmore argues that when humans acquired a key ability for imitation, selective pressure occurred for those who could imitate best and thus most quickly acquire skills. Demonstrations of imitative ability would be in adornment, dance, song, and music, as well as in performance via hunting and gathering abilities.

She argues that imitating gestures for communication would progress to more effective vocalizations, and that this would have led to language. Suddenly a new environment for replication emerged within the cognitive space of human brain activity and the physical world within which humans were able to interact.

Memes are replicants with the three pre-requisite properties for producing an evolutionary system, replication, variance, and selection. Memes replicate within the environment of human behavior (now technologically assisted) using human imitative behavior as their method for replication.

To replicate, memes must pass through four key stages, assimilation (multimodal perception by an individual), retention (within memory), expression (by some motoric act, speech, or gesture, which can be perceived by others), and transmission (to another individual).

The memetic unit has previously been usefully defined as a production rule with the form “if condition, then action” with actions leading to another condition or category. Sets of mutually reinforcing linked production rules (memes) could be combined to produce inferences and this set of rules would constitute a memeplex.
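To make the “if condition, then action” idea a bit more concrete, here is a minimal sketch in Python. It is only an illustration of linked production rules chained into inferences; the `Meme` class, the `infer` function and the fire-making rules are hypothetical names invented for this example, not something taken from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Meme:
    """A production rule: if `condition` holds, then `action` follows."""
    condition: str
    action: str


def infer(memeplex, initial_conditions):
    """Forward-chain over the rules until no new condition is produced."""
    known = set(initial_conditions)
    changed = True
    while changed:
        changed = False
        for meme in memeplex:
            if meme.condition in known and meme.action not in known:
                known.add(meme.action)
                changed = True
    return known


# Hypothetical memeplex: each action becomes the condition of another rule.
memeplex = [
    Meme("sees fire making", "imitates fire making"),
    Meme("imitates fire making", "can make fire"),
    Meme("can make fire", "teaches fire making"),
]

derived = infer(memeplex, {"sees fire making"})
```

Starting from a single observed condition, the linked rules derive everything up to teaching the skill, which is roughly what the excerpt means by rules combining to produce inferences.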

I haven’t quite digested this, particularly in relation to this discussion thread, but when we talk about a community’s technological practices (such as software systems and tools) and its capacity to educate new members by transmission of knowledge.. It happens in the evolutionary context of mimesis and memetics, how language and culture are passed and copied from one individual to another, from group to group, and from one generation to the next.

It adds another angle to the concept of “seizing the means of production”, in this case knowledge production and cultural transmission. And perhaps more justification for open-source software and publicly funded collaborative projects.

That’s clearly the weak point of our forum. We are more a community aiming to free technology from obstacles to malleability, rather than a non-tech community looking for malleable digital media. Maybe we should rename ourselves to “Anti-non-malleable systems”

That’s what I have been trying to advocate for in computational science, for about 30 years now, without much success. My colleagues like my ideas in the abstract, but consider them unrealistic dreams, either not realizable at all or too costly in terms of loss of efficiency. Scientific research being more and more subservient to capitalism means that most of us are forced to consider efficiency as our highest priority. In recent years, efficiency even became more important than reliability.

Moreover, the membranes in our communities are not well-defined. Computational science is a subcommunity within general science. Scientific software development is done both by computational scientists and by software engineers with some scientific background, often in collaboration. You can draw a membrane around a larger system that’s autopoietic, but it’s also so large that specialization inside the membrane effectively removes conviviality for most scientists. Which is something that many of my colleagues accept as inevitable.

Maybe a criterion for an autopoietic system being too large for conviviality is the absence of overarching governance.

heh, heh. Your words make me think of a reminder I have heard from the grassroots communities I work with: “we should be defined not only by what we fight against, but also by what we fight for and celebrate”. The advocacy for malleable systems should bridge the gap between technical and non-technical communities and inhabit the so-called third culture, which lies between two classically opposed ones: the positivist, empirical-analytical one on one side, and the social sciences, arts and humanities on the other. Focusing only on the first side will keep us within a discourse on malleable systems that doesn’t account much for the social part of such systems, leaning heavily towards a techno-solutionist/reductionist view of socio-technical malleable systems and their imbrication(s).

I had a similar experience regarding the acceptance of my ideas and suggested practices among my fellow hackerspace co-founders since 2010: they had “naturalized” the way digital technology worked for them (in fact, they were pretty privileged with the division of labor between developers and users and paid the price of becoming pretty good developers). In their favor I must say that my language back then, referring to “auto-poietic technocultural bootstrapping”, was pretty confusing, as I was still trying to understand the problem I was dealing with and its language, concepts, materialities and practices. And some favorable encounters were still years ahead: my re-encounter with Pharo, to prototype my ideas and make them more understandable, didn’t happen until 2014. Thanks to it, I would later find terms like moldable development and malleable systems, and around that time and later I adopted “metatools” as a clearer, shorter way to talk with technical and non-technical audiences about the problem of reciprocal modification between communities of practice and their digital artifacts.

What I want to point out while remembering this path is that, as ideas, terminology, prototypes and the question itself became clearer, my tech-educated friends found the proposal interesting but too alien, as they were used to the Unix way with its “everything is a file” mantra, editing piles of files and version controlling them in Git, since the accepted status quo made them efficient under the capitalistic dynamics they were already involved in. In contrast, my humanities, social sciences and art friends were much more receptive to the ideas, practices and artifacts, as they didn’t know what the “natural way” to (de)construct technology was and shared some frustration with the status quo and the disempowerment it brings to common people in their day-to-day computing experiences. Non-tech friends were more critical and more willing to make a complex reading of the problem and to engage in a long-lasting practice, but they lacked the technical expertise to give “momentum” to the co-creation of digital artifacts.

Of course, there are exceptions, with “tech people” willing to engage deeply in non techno-centric views of complex problems and “non-tech people” getting deep into technical expertise and materialities. It is with such people that this third culture, referred above, can be cultivated and expanded, to address sociotechnical malleable systems in the more comprehensive holistic way that such enterprise deserves.

Yes!

Do you have examples of developing that third culture? That’s pretty much what I am trying to do now. I have been in contact with the humanities for a while (mainly history and philosophy of science), but integrating both cultures still looks like a vague dream to me.

Why didn’t you use Show Desktop, though?

I have written a treatise on navigation; it applies perhaps: HTML (IPv6 no longer required)

I think this theory can simplify the scratchpad argument. A scratchpad needs to be always available. Which means automatic space allocation: an empty pad should be created when you issue a certain command. I think finding a previous scratchpad should be a separate procedure.
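A minimal sketch of that split, in Python, might look like the following. The `ScratchpadStore` class and its method names are hypothetical, chosen only to illustrate the idea that creating an empty pad is one always-available command and finding an earlier pad is a separate procedure.

```python
class ScratchpadStore:
    """Hypothetical store: creating a pad and finding one are separate."""

    def __init__(self):
        self._pads = []

    def new_pad(self):
        """Always available: allocates a fresh, empty pad on demand."""
        pad = {"id": len(self._pads) + 1, "text": ""}
        self._pads.append(pad)
        return pad

    def find(self, query):
        """Separate procedure: search earlier pads by their content."""
        return [pad for pad in self._pads if query in pad["text"]]


store = ScratchpadStore()
first = store.new_pad()
first["text"] = "notes on navigation"
second = store.new_pad()  # never reuses or reopens the first pad
matches = store.find("navigation")
```

The point of the design is that `new_pad` never has to decide which earlier pad the user meant, so it can stay instantaneous, while `find` can be as elaborate as needed.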

Over here it looks like short and long workshops, called Data Weeks and Data Rodas, a recurrent practice we have developed (since 2015) intended to enhance common, shared knowledge, forming this third culture and open to different kinds of participants.

Our particular practice emphasizes agile documentation, using HedgeDoc, TiddlyWiki, mdBook, Grafoscopio and other tools, and building custom workflows between such tools. And I imagine that at some point we, in this community, could build some kind of “special edition” of a hypermedia publication, showcasing the diversity of conversations and concerns about malleable systems, and inviting others (especially from social sciences, arts and humanities) to enhance such conversation.

That’s a nice goal to have in mind!

I hope eventually to interact with a community that provides guidance to what type of technology it wants.

The reason I haven’t until now is that I believe it is necessary to solve the problem of compositionality of communities, the construction of greater social structures that could eventually replace what we call capitalism. And this is an unsolved problem in computer science; it is equivalent to having a language that describes distributed computing. (We need to have an abstraction of a group of actors that act asynchronously, in other words, a community.)

While I consider that thinking about post-capitalistic dynamics is important, in my experience the eventual replacement of capitalism (via compositionality of communities and/or other means) has not been a prerequisite to working and co-designing technologies with communities, because while those communities live under the evident influence of capitalist dynamics, they are not thought/experienced under a capitalist logic, despite the extractivist/gentrifying forces that try to rule everything. This very forum is a place where non-capitalistic incentives rule different forms of value production and exchange, and we have a lot of examples in different communities around the world, including multinational ones like those of Libre/Open Source, the local hackerspaces, and others like the edible mushroom collective and the solidarity savings I’m part of.

As said in other threads, those communities that exemplify other possibilities beyond capitalism usually share their knowledge mostly in oral traditions that you experience while you’re close to them, far from academic circles or citation metrics. However, the beautiful book The Mushroom at the End of the World: On the Possibility of Life in Capitalist Ruins (Anna Tsing, 2015) showcases a non-monolithic and pretty embodied critique of capitalism that is already here, in the ruins that such a system creates and sustains.

Wow, this has become quite the mega-thread. I’m super super interested in the web as a substrate for objects that work across systems, so that’s my bias coming in here. Apologies for kind of blowing through 130 existing comments (which I was reading then skimmed!) but, to return to the original post, I retain a strong interest in what NeXT actually was. There’s some material posted in the thread that speaks to a lot. But I feel like, in general, almost all NeXT coverage is super vague and super unclear about what technically was going on, and how systems worked.

There’s these hints that NeXT has objects, objects everywhere. That they exist in some persisted state. That they network. That, for one rare time in computing perhaps it wasn’t the app that was the thing, but that the entities had their own lives, and existed across apps. That inversion, de-focusing the tools, and emphasizing the entities, allowing unbounded experiences around the things in the machine & reifying the things, calls to me strongly.

And I just have no idea how real it was. Very very little of the material out there about NeXT really goes in depth on what was really going on. What was software development like on NeXT? How was it different? What was it like building a system where objects were networked? Was object persistence automatic for all objects, or did you need to do work to use that? I really feel like the most interesting part of NeXT is totally completely ignored, is not at all taken seriously in any way, and there are so few leads to get in and find out. I want a Speaking for the Dead on this. I want to know further how truthfully NeXT really got to its aspirations to liberate the object from the tyrant, the application, and how networked it made its computing space.

I’ve whinged like this a couple times. A recent one: I hear this a lot, but I don't know why that's exciting. I remember using Window... | Hacker News

I also participate in such communities. The new society is already here in these communities.

But these communities are quite fragile to external forces or are unable to expand to other areas or be entirely autonomous.

There is no prerequisite to join those communities. But their proliferation demands some technological advancements.

Consider the case of the printing press. Before its invention, the transfer of knowledge was done by monks who wrote new books by hand. In my opinion, we are at a similar stage, where the cost of custom software (or compositionality) for a community is prohibitively high. That is the reason why we need to find ways to make it easier.

Thus some of us will be more attached to a specific community, receiving valuable feedback, while others will be more detached, trying to solve the general problems that all communities have.

Let me also point out a secondary contradiction. We all want the user to be the developer. Thus we are trying to create frameworks / languages that empower the user. But this same process creates a new distinction between the people that build those malleable substrates and those that use them.

I am not sure how it relates to the above discussion, maybe not. My point though is that there will always be that distinction, between the developer and the user. That is why we need to think in terms of autonomous communities that are self-referential, meaning that they reproduce their own developers.

There’s no contradiction for me. Like all technology, computing technology is layered. I can be a high-agency user-developer of one layer and yet rely on others to maintain the infrastructure that supports that layer. Otherwise I’d have to start learning about chip design.

Recommended reading: The Toaster Project: Or a Heroic Attempt to Build a Simple Electric Appliance from Scratch, by Thomas Thwaites (Internet Archive)

Any attempt to free users at one level by creating a framework, will create a method of control at the higher level where the framework is developed.

That is why I emphasize the need for self-control / autonomy / self-organization as opposed to complete freedom.

Indeed, but I expect this to be tricky in a complex layered tech stack. If you want to extend autonomy to chip design and manufacture, the autonomous community must be so large that it can already remove control from its sub-communities. So it takes some form of multi-level governance which as far as I know hasn’t been figured out yet.

It makes me think of bootstrappable builds and self-hosted technology stacks, as a software metaphor for this kind of social organization. Reducing or eliminating any external dependencies, so the organism relies only on its own elements for creating new copies and next generations. “A system capable of producing and maintaining itself by creating its own parts.”
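As a rough illustration of the metaphor, here is a small Python sketch that checks whether a system is closed over its own parts. The function names and the example toolchain are hypothetical, and the check only covers dependency closure; it says nothing about the trusted-seed problem that real bootstrappable builds also have to address.

```python
def external_dependencies(system):
    """Return the parts a system needs but does not produce itself.

    `system` maps each part to the set of parts required to make it.
    """
    produced = set(system)
    required = set().union(*system.values())
    return required - produced


def is_self_hosted(system):
    """A system is self-hosted here if it has no external dependencies."""
    return not external_dependencies(system)


# Hypothetical toolchain: the editor still relies on an outside SDK.
toolchain = {
    "compiler": {"compiler", "linker"},
    "linker": {"compiler"},
    "editor": {"compiler", "vendor_sdk"},
}

# The same toolchain after the outside dependency has been removed.
closed_toolchain = {
    "compiler": {"compiler", "linker"},
    "linker": {"compiler"},
    "editor": {"compiler"},
}
```

In this toy model the first toolchain leaks one dependency across its membrane (`vendor_sdk`), while the second one only needs parts it produces itself.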

How this process of autopoiesis, self-creation, applies to software and computing.. It leads to a question about the social context of technology, how its goals, innovations and development are driven by politics and power structure in an industrialized capitalist society. What’s hopeful and surprising for me is that throughout the history of personal computers, the world wide web, open source and free software movement - a major force driving it forward is a communal collaborative philosophy and attitude of camaraderie, “the quality of familiarity and sociability”.

The computer and the web grew out of a cultural milieu of scientists, mathematicians, academics, professionals and experts; and equally, or perhaps more so, from participation and contribution of people outside of academia and business: users, amateurs, enthusiasts, hobbyists; self-taught experts, beginners, noobs, pseudoscientists and misguided kooks. That quality of weirdness, living outside the box, was part of the charm of the early web subculture, whose influence can be recognized even in the walled gardens of mainstream social media. There’s a thread that ties to outsider art, weird fiction, and of course, science fiction and fantasy.

Wyrd is a noun formed from the Old English verb weorþan, meaning ‘to come to pass, to become’. Adjectival use of wyrd developed in the 15th century, in the sense ‘having the power to control destiny’, originally in the name of the Weird Sisters, i.e. the classical Fates, who in the Elizabethan period became detached from their classical background and given an English personification as fays.

The Proto-Indo-European root is wert- meaning ‘to twist’, which is related to Latin vertere ‘turning, rotating’, and in Proto-Germanic is werþan- with a meaning ‘to come to pass, to become, to be due’. The same root is also found in weorþ, with the notion of ‘origin’ or ‘worth’ both in the sense of ‘connotation, price, value’ and ‘affiliation, identity, esteem, honour and dignity’.

Wyrd has cognates in Old Saxon wurd, Old High German wurt, Old Norse urðr, Dutch worden (to become) and German werden.

The weird taps into an ancient underground stream of history and culture, archaic layers of the psyche of humanity. It’s magical thinking, dream logic, theater and make-believe of art, the spell of “what if”. It’s natural that technological inventions are often prefigured by works of fiction and mythologies - horseless carriages, flying machines, intelligent robots and a world-sized brain - because science and technology are not only a product of logic, but a process of imagination.

reproduce their own developers .. communities that are self-referential

Who develops the developers, planting and cultivating community members who help it grow and go on to start new communities?

Surveying the landscape of software ecosystems, my impression is that the biggest ones form around languages, frameworks, open-source projects, proprietary products, niche interests. Would be curious to see actual data and examples of successful communal projects. I imagine long-lived groups have a cycle of teaching new members, some of whom become experts and teachers themselves. It’s a lossy transfer of knowledge and experience - each generation adds, changes, and forgets important things from the collective memory.

What would a self-hosted bootstrappable community look like?

Bootstrapping the technology stack is part of that larger question, as I see it. Food, water, shelter. Fire, electricity, communication, computing. It’s about the bootstrappability of the individual, community, society, politics and the entire civilization stack.

Permacomputing is both a concept and a community of practice oriented around issues of resilience and regenerativity in computer and network technology inspired by permaculture.

Permacomputing encourages the maximization of hardware lifespan, minimization of energy usage and focuses on the use of already available computational resources. It values maintenance and refactoring of systems to keep them efficient, instead of planned obsolescence, permacomputing practices planned longevity.

“The lady doth protest too much,” methinks. Sounds like the powers that be are actually afraid of gods, ghosts and goblins, pagans and wildlings, witches and wizards, pirates, gypsies and natives. A re-enchantment of the world means we break free from the evil spell of the existing system, which has ruled over it for generations. Thankfully its illusions appear to be falling apart of its own inherent self-destructivity. From its ashes will rise another Renaissance, with philosopher kings and faery queens of our intergalactic future.

Rewilding and community resilience. For a community to be self-sufficient and sustainable, it needs to (re)think all aspects of its existence, growth, maintenance, life cycles, internal and external dependencies, relationship with other communities and the outside world. (Or does it? Successful organisms are often seemingly simple, yet well-adapted to the environment - some have lived for millions of years with no thinking involved at all, just eating everything they can and multiplying.)

Practically it’s impossible to have zero dependency, to achieve complete freedom and independence. There’s a necessary surface, like a cell with a membrane, an interface and exchange between self and other, between a community and non-community, non-members, larger social structures, laws and states. It relates to individual and collective identity, how it maintains itself as an idea and in practice.

To imagine what post-collapse community and computing can look like, and what kind of self-hosted infrastructure we can prepare for our beloved future-primitive earthlings. Part of the effort is to cultivate the culture and value system, as a fertile ground for ideas, practical tools, know-how, building blocks.

To rethink everything from first principles, down to the fundamentals. Essentially going through the exercise of rebuilding civilization from scratch ourselves, in order to understand what is involved in the modern life stack, to untangle the dependencies and vendor the most useful libraries, to make it all ours. Like a Stage X for community as software, “a minimal, fully bootstrapped, deterministic, multi-party-signed distribution for verifiable infrastructure.”

Rewilding is a funny word. A re-naturing of civilization, humanity’s return to nature like a wayward son comes home. The tragedy is that we never left, the Garden of Eden is where we’ve always lived - a heaven on earth and what have we done with it. Be wild, weird child, and dream of a better world.

The issue I always come back to is “what is a community?” I see communities as layered, hierarchically structured, partially overlapping. It’s a mess!

The biosphere as a whole is an autopoietic system with minimal dependencies, basically just incoming sunlight (and if you want to dig down further, favorable laws of operation of the universe).

The human species is autopoietic as well, with dependencies on its physical and biological environment for food and shelter.

It’s the smaller scales that become complicated to analyze. Looking at just my work environment as a researcher in computational biophysics, I can identify multiple communities that I am part of: “computational science”, “biophysics”, “scientific software development”, the scientific community at large, multiple communities of Open Source software projects, etc. These various communities have common but also diverging needs and interests. Some of them are moderately autopoietic, but all depend on an incoming flux of people and resources for their continued existence. Some of them also depend on other communities, as for example in software stacks.

Maybe a community isn’t like a single cell with a defined membrane, but more like a wave pattern, an emergent quality of a unified force, or at least an illusion of a single organizing will, arising from the collective behavior of independent particles.

A highly social bird, starlings associate in flocks of varying sizes throughout the year and are widely known for a distinctive, often dramatic swarming behavior known as murmuration: a simultaneously synchronized and seemingly random flock movement characterized by sudden, erratic direction changes without an observable leader. The sharp pushing, pulling, diving, pulsating and swooping of the flock in response to the individual movements may confuse and discourage predators such as falcons, providing a collective protection. The term murmuration derives from the low, indistinct sounds of a dense flock’s wings, i.e., the murmur.

Speaking of a low indistinct sound and collective behavior, a paper I found fascinating:

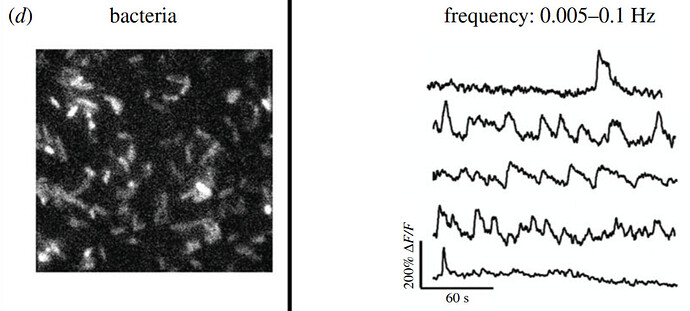

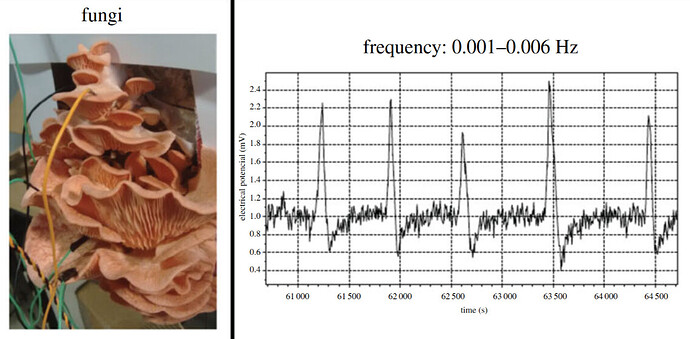

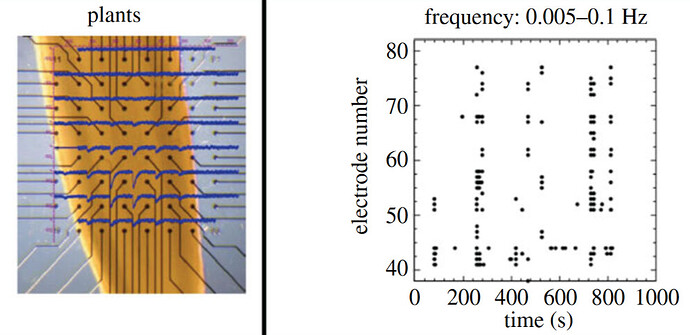

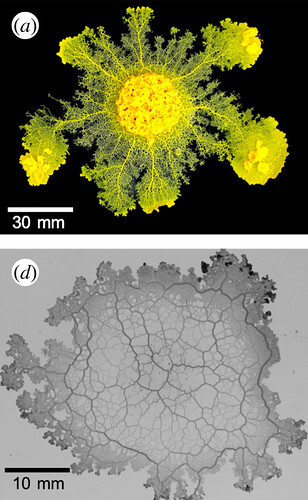

- Spontaneous electrical low-frequency oscillations: a possible role in Hydra and all living systems. From Philosophical Transactions of the Royal Society B: Biological Sciences. January 2021, Vol.376.

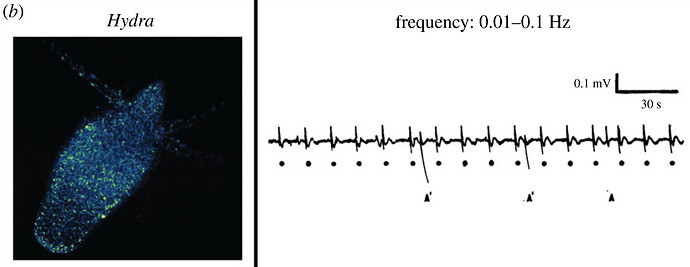

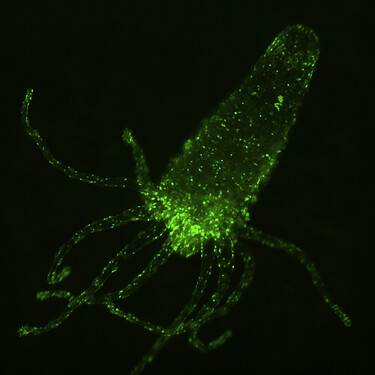

As one of the first model systems in biology, the basal metazoan Hydra has been revealing fundamental features of living systems since it was first discovered by Antonie van Leeuwenhoek in the early eighteenth century.

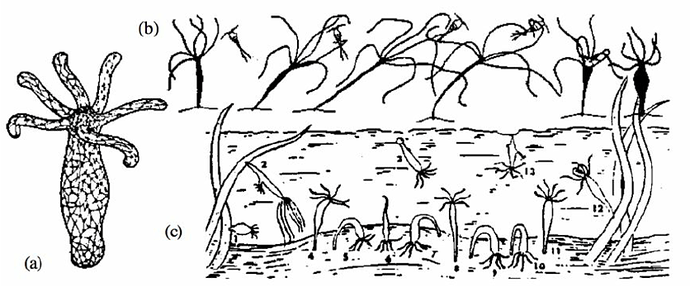

Hydra are part of the Cnidaria phylum with jellyfish, sea anemones, corals. An alien-looking micro-creature without a brain, only a simple form of undifferentiated nerve net, yet “catches prey by coordinated action of its tentacles, and has no less than 12 different forms of motion, from stages of somersaulting to snail-like gliding.”

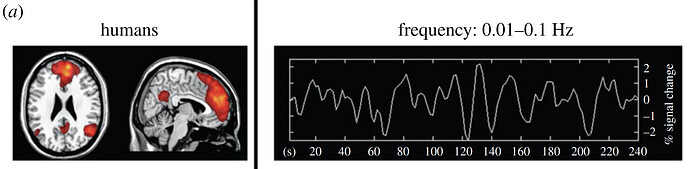

While it is well-established within cell and developmental biology, this tiny freshwater polyp is only now being re-introduced to modern neuroscience where it has already produced a curious finding: the presence of low-frequency spontaneous neural oscillations at the same frequency as those found in the default mode network (DMN) in the human brain.

This organism-wide oscillatory electrical activity of low frequency (typically 0.01–0.1 Hz, but the exact frequency is organism-dependent) is spontaneously produced independent of external stimuli and does not appear to directly generate behaviour. The DMN in the human brain has become widely studied and hypothesized to play a role in ‘resting-state’ mental processes, such as spontaneous thought, episodic memory, mind-wandering and self-related processing.

Increasing evidence suggests such spontaneous electrical low-frequency oscillations are found across the wide diversity of life on Earth, from bacteria to humans. This paper reviews the evidence for SELFOs in diverse phyla, beginning with the importance of their discovery in Hydra, and hypothesizes a potential role as electrical organism organizers, which supports a growing literature on the role of bioelectricity as a ‘template’ for developmental memory in organism regeneration.

The same cortical oscillation frequency distribution found in human brains has been found in the cortex of all mammalian brains studied thus far, including in chimpanzees, macaques, sheep, baboons, pigs, dogs, cats, rabbits, guinea pigs, rats, hamsters, gerbils, mice and bats. Using new functional magnetic resonance imaging (fMRI) techniques, SELFOs have recently been observed in human, non-human primate, and rat spinal cords, indicating these oscillations pervade the entire mammalian central nervous system.

Spontaneous neural activity is not specific to mammals, however. Zebrafish brains generate a wide range of spontaneous oscillation frequencies, including the ultraslow-frequency range (0.01–0.1 Hz). Brain-wide oscillations of a variety of frequencies have also been recorded in a wide range of insects, including moths, locusts, water beetles, honeybees and flies.

Since the discovery of the molecular head organizer in the Hydra over 100 years ago, much has been learned about how organisms build their bodies. That is, we have learned much about the spatial domain of biology—how multiple independent units (e.g. proteins in cells and cells in multicellular organisms) are coordinated in space to form a unified, structural whole.

However, much less is known about the temporal domain of biology. Once a structural whole, a body, is built, how is it maintained and how is its activity coordinated in time? How does such a body constructed of many parts move and behave as one, coherent unit?

Those sure sound like the kind of questions that every community faces.

Maybe self-sufficiency and independence, or bootstrappability from scratch, are not as important or practical as seeking and providing mutual support, interdependence, and symbiosis.

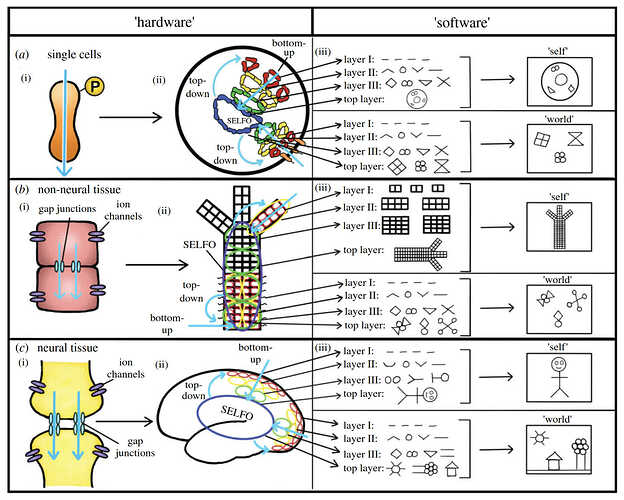

Electrical signalling throughout life

(a) Electrical signalling in single cells in which the ‘hardware’ may consist of (i) proteins that pass current (blue arrow) depending on their configuration, which can be altered with protein modifications (such as phosphorylation as shown). These ‘hardware’ pieces can be arranged in different networks within cells (ii) to form electrical circuits encoding information about the internal (top right circuit) and external (bottom right circuit) states, which can feed up to the spontaneous electrical low-frequency oscillator (SELFO) at the top level to be integrated to produce abstract representations (i.e. ‘software’) (iii) of both the cell’s internal state (i.e. ‘self’ model) and external environment (i.e. ‘world’ model). The top-level SELFO can then feed back down to coordinate and update the lower-level components.

(b) The same general architecture applies at the next level of scale in non-neural tissue where the ‘hardware’ becomes (i) non-neural cells that can be connected via gap junctions to allow the passage of ions intercellularly (blue arrows) while ion channels function to conduct ions intra- or extracellularly..

(c) In organisms with nervous systems, the ‘hardware’ is upgraded to neurons.. These ‘hardware’ pieces can be arranged in different configurations within neural tissue to form faster and more complex electrical circuits encoding information about the internal (top right circuit) and external (bottom right circuit) states.

It’s describing a flow of information from the “bottom up”, lowest primitive elements to the whole collective organism; and a reciprocal “top down” flow of feedback and control. It’s also talking about “hardware”, the mechanism or medium by which information is encoded; and “software”, the information itself, layers of abstract representations from simple to more complex ideas, forming a model or image of the self and the world.

All biological systems are composed of many constantly changing parts that must continually cooperate to form a unified whole that can both maintain its structure (i.e. its body) and move it to generate coherent, adaptive behaviour. This implies some part of the system must have access, however indirectly, to all the information within the system. ..SELFO (spontaneous electrical low-frequency oscillations) may thus continuously receive bottom-up electrical information from the entire system which it then might integrate over a specific time window based on its frequency, before taking a ‘snapshot’ of the organism and its environment.

Having considered how SELFOs may emerge bottom-up via a variety of mechanisms within biological organisms, I propose three potential functions of SELFOs within living systems:

It seems like these aspects of an organism’s whole/part, inner/outer communication network can apply to how a community keeps organizing itself over time.

(i) maintaining [the living system] at or near their critical point

(ii) integrating all the lower-level electrical information in the system

(iii) continually communicating that high-level ‘view’ back down .. to coordinate and update them on the overall state .. to generate coherent, adaptive behaviour

The parts communicate with the whole, informing of the state of the world and self. The whole “directs the will” to move the entire organism (or group of organisms) as one.
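To make that loop concrete for myself, here is a minimal toy sketch in Python. It is not a model from the paper: `Part`, `Integrator`, the averaging rule, and the `coupling` value are all my own assumptions, chosen only to show parts reporting upward, the whole integrating over a time window, and the resulting snapshot being sent back down.

```python
from collections import deque
from statistics import mean


class Part:
    """A hypothetical low-level unit holding one local state value."""

    def __init__(self, state):
        self.state = state

    def report(self):
        # Bottom-up: the part reports its local state to the whole.
        return self.state

    def update(self, snapshot, coupling=0.5):
        # Top-down: nudge the local state toward the shared snapshot.
        # The coupling strength is an arbitrary illustrative value.
        self.state += coupling * (snapshot - self.state)


class Integrator:
    """A hypothetical top-level integrator with a fixed time window."""

    def __init__(self, window):
        # Keep only the most recent `window` collective readings.
        self.history = deque(maxlen=window)

    def step(self, parts):
        # Integrate the bottom-up reports over the time window.
        self.history.append(mean(p.report() for p in parts))
        snapshot = mean(self.history)
        # Broadcast the high-level view back down to every part.
        for p in parts:
            p.update(snapshot)
        return snapshot


def spread(parts):
    """Distance between the most and least extreme part states."""
    states = [p.state for p in parts]
    return max(states) - min(states)


parts = [Part(0.0), Part(4.0), Part(8.0)]
whole = Integrator(window=3)
before = spread(parts)
for _ in range(10):
    whole.step(parts)
after = spread(parts)
print(before, after)
```

In this toy the parts start far apart and end up nearly aligned, because every part receives the same snapshot. A real organism (or community) would of course need the parts to stay different while still acting coherently, which simple averaging does not capture.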

In recent readings I keep encountering the idea of criticality from different angles. Self-organized criticality, critical point/state/phenomena. Related to non-equilibrium systems and non-linear dynamics, phase transitions and systems change.

What is the field of study concerned with modelling the collective behavior of living systems?

Cybernetics is the transdisciplinary study of circular causal processes such as feedback and recursion, where the effects of a system’s actions (its outputs) return as inputs to that system, influencing subsequent action. It is concerned with general principles that are relevant across multiple contexts, including in engineering, ecological, economic, biological, cognitive and social systems and also in practical activities such as designing, learning, and managing.
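A circular causal process is easy to sketch in a few lines. The following is only an illustration under my own assumptions (the function name `feedback_loop`, the `gain` value, and the numbers are hypothetical): the system's output is compared with a goal, and the difference is fed back in as the input that shapes the next action.

```python
def feedback_loop(target, state, gain=0.5, steps=20):
    """Run a simple negative-feedback loop and return the state history."""
    history = [state]
    for _ in range(steps):
        # The current output returns as input: compare it with the goal.
        error = target - state
        # The fed-back error influences the next action.
        state = state + gain * error
        history.append(state)
    return history


trajectory = feedback_loop(target=20.0, state=10.0)
print(trajectory[0], trajectory[-1])
```

With a gain between 0 and 1 the remaining error shrinks at every step, so the state settles toward the target. Other gains behave differently (a gain above 2 makes this particular loop diverge), which is one small way to see why the structure of the loop matters more than any single part.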

Systems thinking?

A system is a set of things .. interconnected in such a way that they produce their own pattern of behavior over time.

Systems thinking is a way of making sense of the complexity of the world by looking at it in terms of wholes and relationships rather than by splitting it down into its parts. It has been used as a way of exploring and developing effective action in complex contexts, enabling systems change.

How do we change the structure of systems to produce more of what we want and less of that which is undesirable? MIT’s Jay Forrester likes to say that the average manager can guess with great accuracy where to look for leverage points - places in the system where a small change could lead to a large shift in behavior.

– Thinking In Systems: A Primer (2008) - Donella Meadows

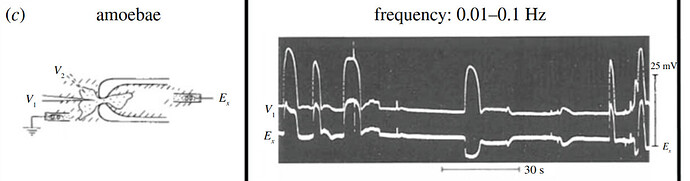

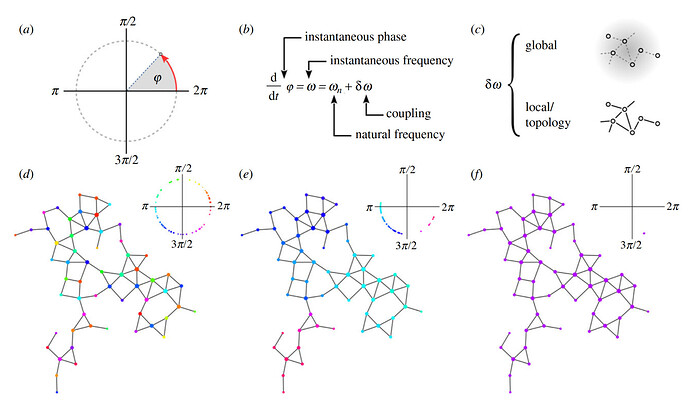

Adaptive behaviour and learning in slime moulds: the role of oscillations

The slime mould Physarum polycephalum, an aneural organism, uses information from previous experiences to adjust its behaviour, but the mechanisms by which this is accomplished remain unknown. This article examines the possible role of oscillations in learning and memory in slime moulds.

Slime moulds share surprising similarities with the network of synaptic connections in animal brains. First, their topology derives from a network of interconnected, vein-like tubes in which signalling molecules are transported. Second, network motility, which generates slime mould behaviour, is driven by distinct oscillations that organize into spatio-temporal wave patterns. Likewise, neural activity in the brain is organized in a variety of oscillations characterized by different frequencies.

Interestingly, the oscillating networks of slime moulds are not precursors of nervous systems but, rather, an alternative architecture. Here, we argue that comparable information-processing operations can be realized on different architectures sharing similar oscillatory properties.

After describing learning abilities and oscillatory activities of P. polycephalum, we explore the relation between network oscillations and learning, and evaluate the organism’s global architecture with respect to information-processing potential. We hypothesize that, as in the brain, modulation of spontaneous oscillations may sustain learning in the slime mould.

The American scientist Norbert Wiener characterised cybernetics as concerned with “control and communication in the animal and the machine”.

Another early definition is that of the Macy cybernetics conferences, where cybernetics was understood as the study of “circular causal and feedback mechanisms in biological and social systems”. Margaret Mead emphasised the role of cybernetics as “a form of cross-disciplinary thought which made it possible for members of many disciplines to communicate with each other easily in a language which all could understand”.

Other definitions include: “the art of governing or the science of government” (André-Marie Ampère); “the art of steersmanship” (Ross Ashby); “the study of systems of any nature which are capable of receiving, storing, and processing information so as to use it for control” (Andrey Kolmogorov); and “a branch of mathematics dealing with problems of control, recursiveness, and information, focuses on forms and the patterns that connect” (Gregory Bateson).

Our old friend Kolmogorov, Привет (hello). Bateson’s Steps to an Ecology of Mind, a book I read a few lifetimes ago. Norbert Wiener, I remember one of his books has a dystopian title, “The Human Use of Human Beings”.

Wiener is one of the key originators of cybernetics, the science of communication as it relates to living things and machines, a formalization of the notion of feedback, with implications for engineering, systems control, computer science, biology, neuroscience, philosophy, and the organization of society.

The field has roots in the works of Leibniz, Babbage, Maxwell, and Gibbs.

Wiener’s work with cybernetics influenced computer pioneer John von Neumann, information theorist Claude Shannon, anthropologists Margaret Mead and Gregory Bateson, and through them, anthropology, sociology, and education.

How deep it goes, each branch of inquiry leads to more branches, a fractal of intellectual history and collaborative thinking over generations.

Wiener is quoted as saying, “The general idea of a computing machine is nothing but a mechanization of Leibniz’s Calculus Ratiocinator.” He considered Leibniz the “patron saint of cybernetics”. The calculus ratiocinator anticipated aspects of the universal Turing machine.

Leibniz is an all-time favorite, a 17th-century German polymath and philosopher who saw his binary arithmetic mirrored in the hexagrams and cosmological ideas of the I Ching, a Chinese divination manual dating to around the 9th century BC. Off the path, I’m drawn to the weird, brilliant philosophers and dreamers of that era of Europe, like Athanasius Kircher, Rudolf the Second and his crew, and Elizabeth the First’s court alchemist John Dee.

John Dee (1527 – 1608) was an English mathematician, astronomer, teacher, astrologer, occultist, and alchemist. He was the court astronomer for, and advisor to, Elizabeth I. As an antiquarian, he had one of the largest libraries in England at the time.

Dee’s activities straddle magic and modern science. He was invited to lecture on Euclidean geometry at the University of Paris while still in his early twenties. He was an ardent promoter of mathematics, a respected astronomer and a leading expert in navigation, who trained many who would conduct England’s voyages of discovery.

“Voyages of discovery” is one way to put it. As a political advisor, John Dee advocated the foundation of English colonies in the New World to form a “British Empire”, a term he is credited with coining.

Meanwhile, he immersed himself in sorcery, astrology, and Hermetic philosophy. Much effort in his last 30 years went into trying to commune with angels, so as to learn the universal language of creation and achieve a pre-apocalyptic unity of humankind.

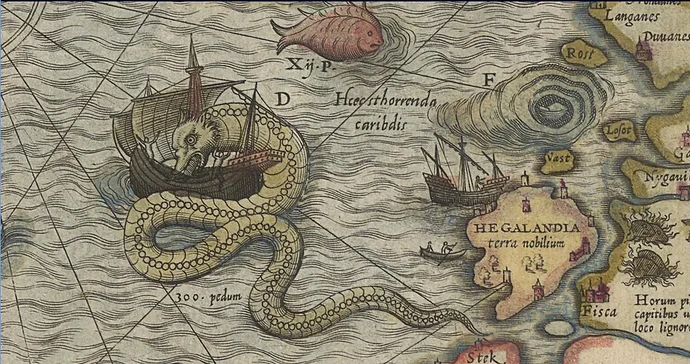

Before the disenchantment of the modern age, when it still wasn’t clear where reality ended and fantasy began - like world maps with sea monsters beyond terra incognita. Here be dragons.