I’m drawn to riff on some of the concepts in the post by a contrast of opposites, like:

- Linear logic (sequences) ↔ Non-linear logic (jumps, loops)

- Geometrical or spatial thinking (images) ↔ Linguistic thought process (words)

- Illusion of infinite time, memory, and other computational resources ↔ Working with limits, boundaries, the usefulness of constraints

- Abstraction as hiding of reality and details ↔ Revealing inner processes and material

- Names and symbols (references and representations) ↔ Values and operations (referents, the thing or process being represented)

If signs can be used to tell the truth, they can also be used to lie. – Umberto Eco

But I’m unfamiliar with catlangs, other than introductory knowledge like in this talk at Strange Loop.

Where Uxn is mentioned in the latter part as an example of a versatile “readable” assembly language. My interest in Forth, postfix notation (“reverse Polish”), and stack-based languages was renewed recently, as a kind of surprise. I’ve been pursuing an idea of a low-level Lisp dialect that compiles to WebAssembly, trying from various angles and levels of abstraction - written in C, Scheme, Python, TypeScript, then eventually raw Wasm text format.

Hand-writing assembly code is oddly comfortable after a while. There’s just a big array

of bytes as linear memory, and a handful of instructions to operate on numbers. The rest of the universe is up to you. To get fluent, I’m reading other people’s hand-written assembly programs - some are truly a kind of literature.

Like this Ichigo Lisp, a LISP 1.5 interpreter by a Japanese author. “Ichigo” いちご means strawberry,  .

.

It even includes a just-in-time compiler. Or the Ribbit Scheme virtual machine written in Wasm.

WebAssembly code looks like Lisp, a tree structure of symbolic expressions, but that’s only syntactic sugar for ease of reading and writing.

Folded Instructions - Instructions can be written as S-expressions by grouping them into folded form. In that notation, an instruction is wrapped in parentheses and optionally includes nested folded instructions to indicate its operands.

The expression (* (+ x 2) 3) in folded form:

(𝚒𝟹𝟸.𝚖𝚞𝚕

(𝚒𝟹𝟸.𝚊𝚍𝚍

(𝚕𝚘𝚌𝚊𝚕.𝚐𝚎𝚝 $𝚡)

(𝚒𝟹𝟸.𝚌𝚘𝚗𝚜𝚝 𝟸))

(𝚒𝟹𝟸.𝚌𝚘𝚗𝚜𝚝 𝟹))

It’s unfolded to a stack-based linear stream of instructions, which directly corresponds to the binary format.

𝚕𝚘𝚌𝚊𝚕.𝚐𝚎𝚝 $𝚡

𝚒𝟹𝟸.𝚌𝚘𝚗𝚜𝚝 𝟸

𝚒𝟹𝟸.𝚊𝚍𝚍

𝚒𝟹𝟸.𝚌𝚘𝚗𝚜𝚝 𝟹

𝚒𝟹𝟸.𝚖𝚞𝚕

Even in this simple example - or maybe because of its simplicity, it’s easier to see the difference - the true nature of the computation happening is revealed in its catlang form. The Lisp syntax offers an illusion for convenience, from the first step: an action (multiply) that “waits” for its arguments to be evaluated and passed; but the actual first action that happens is to push a value from x on the stack.

In script/infix form, (x + 2) * 3, the evaluation order goes like this, step by step:

( ; Start expression - Defines precedence of add operation over multiply

x ; Step #1 <-- Actual first thing evaluated

+ ; Step #3 Add..

2 ; Step #2 <-- Next thing evaluated

) ; Push result to stack

* ; Step #5 Multiply..

3 ; Step #4 <-- Evaluated and passed to multiply

In list/folded form:

(* ; Step #5 Multiply..

(+ ; Step #3 Add..

x ; Step #1 <-- Actual first thing evaluated

2) ; Step #2 <-- Next thing evaluated

3) ; Step #4

In  form:

form:

x ; Step #1 Push value to stack

2 ; Step #2 Push value to stack

add ; Step #3 Add (and push result to stack)

3 ; Step #4 Push value to stack

mul ; Step #5 Multiply

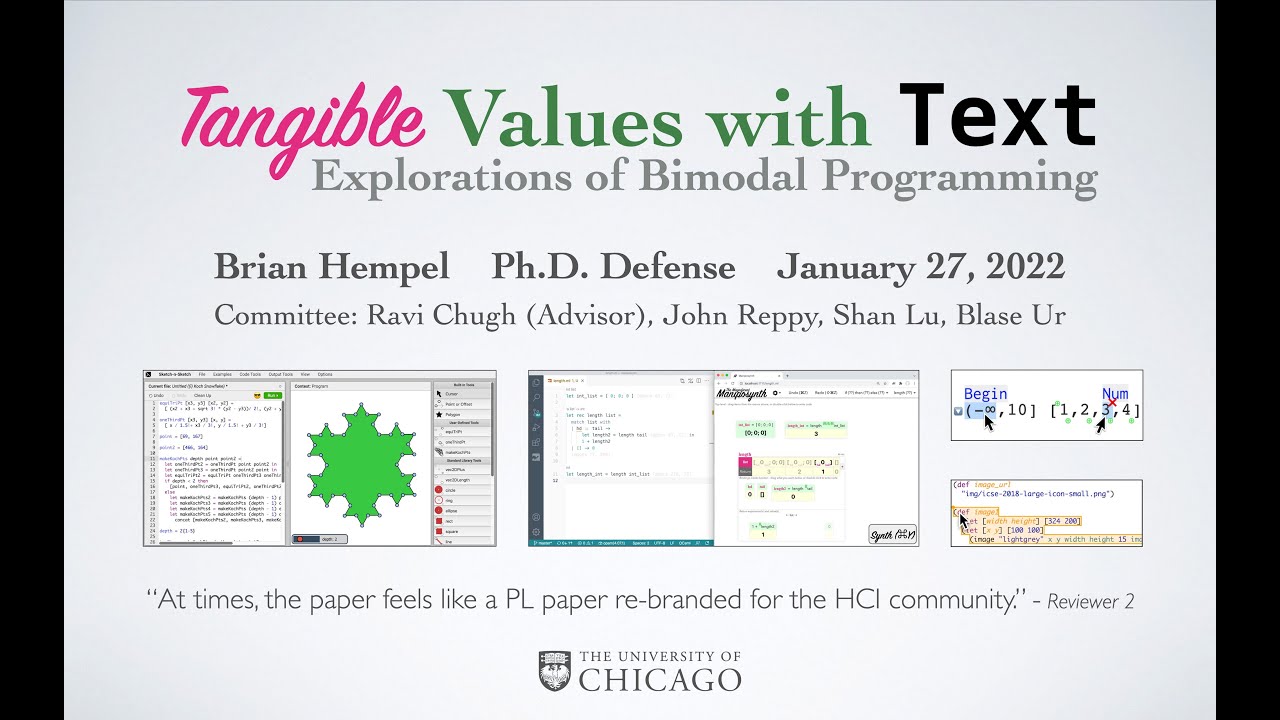

Today, before I started this comment, I’d been listening to this talk:

And I happened to pause it on a slide that says:

Embrace non-linear editing

Pure functional programming (programming without state) complements non-linear editing because, without state, one need not manage time.

I wonder how it relates, but in the comparison I made above of how an expression in script/lisp/catlang syntax is evaluated in order, the stack-based concatenative notation seems to map most directly to the actual computation happening; with the most straight-forward sequential logic in a linear stream of instructions; with the least number of “things to keep in your head” while evaluating the steps.

WAForth: Forth Interpreter and Compiler for WebAssembly.

This is another example of hand-written WebAssembly of non-trivial scope.

There’s a lot to love about the project, including Thurtle for turtle graphics.

Looking at this code though, maybe something like LOGO is more intuitive for teaching programming. The first line:

450 CONSTANT SIZE

For anyone unfamiliar with how it works, it probably feels too sudden to start with the value 450. The “size” is the main character of the line, and 450 is only a specific point in the range of values. If it were written in natural language, it might flow better as:

const size = 450

- The constant (adjective)

- “size” (noun)

- is (verb)

- 450 (quantity).

But then again, this subjective naturalness of grammar may depend on a person’s mother tongue or primary language. In anthropology I’ve heard of languages with no nouns, where all forms are temporary states in the eternal flux: there is no table, it is only table-ing. That describes the situation more accurately than nouns and objects with an implicit assumption, for what is a “constant” but an illusion of infinite time.

Maybe in some human languages the speaker is expected to “get to the point” and say the specific value first. 450 is the size of the plant.7 branches. 160 degrees spread.

About illusion of infinite memory, what comes to mind is garbage collection and how it frees programs from worrying about managing memory - efficiently allocating, freeing, and defragmenting space. Somewhat related educational articles by the same author:

That Scheme to Wasm compiler is getting closer to what I wanted, but it’s based on WasmGC (WebAssembly garbage collection) feature, that’s relatively new and not part of Wasm v1, the original simpler spec I’ve decided to use as a stable pillar, similar in role to C99, for its ease of implementation and wide availability/compatibility with runtimes. In any case this particular Scheme is still too high-level and dynamic, I want a language that can be slightly above assembly, so it needs static typing - in Wasm v1 there are only 4 types of values (integers i32/i64 and floats f32/f64) - and manual memory management, no garbage collection, with possible benefit for use cases like audio processing.

Then I found Pre-Scheme, “a statically-typed dialect of Scheme with the efficiency and low-level machine access of C”. Very interesting, but doesn’t quite fit my use case.

Whether a language compiles to Wasm, or x86/ARM/RISC-V instruction set architectures, what’s left after removing the abstractions is a stack-based machine, for which a concatenative language is a direct mapping and representation of how it works. But I confess my true longing, and what’s emerging from my explorations, is a Scheme-like abstract language to bridge between languages and data formats, from low-level assembly code to higher abstractions like names, lists, strings - to describe the spectrum of data and code, documents and programs.

What I’m learning is that being able to deterministically “fold” into a Lisp-like tree, and “unfold” into a Forth-like linear stream of instructions, is useful not just in terms of data structure and algorithm, but as part of language design and syntax, conversion/translation, interpreting and compiling programs.

Another area where this is relevant for me, is that I’ve been exploring tree-sitter, a parser generator library that supports dozens of languages in a uniform interface. Each grammar is compiled to a C program (therefore can be built as Wasm binary) that parses source code of the given language to a concrete syntax tree.

It can incrementally parse syntactically incomplete/invalid programs, which is useful for integrating with code/text editors. By design the parser emits a linear stream of events, which for most purposes is the best way to efficiently process the syntax tree part by part, creating and discarding state, instead of keeping the entire tree in memory. A similar contrast exists between immediate-mode graphics, that creates and destroys the universe on every moment (“tick” or frame); and retained-mode graphics, which builds the entire tree at the start and mutates its state over the course of the running program. It’s simpler to manage a single immutable moment every time (now), than having to keep up the illusion of a stable identity over time.

It feels like every language and data format can be parsed into a Lisp-like tree (or graph), then flattened and unfolded into a linear cat-lang form as needed. Maybe these ways of structuring an expression - grammar or dialect - are computationally equivalent, as a sentence spoken in different languages can have the same meaning - or similar enough for practical purpose, where the conversion to and from syntaxes are lossless in terms of information.

Let me illustrate this with a recent example, involving my work on “metabiology” that models biological evolution by studying the evolution of mutating software. I am attempting to unify theoretical biology and theoretical computer science based on the ansatz that DNA is digital software.

And having set out on this path, I immediately discover that Stephen’s NKS is highly relevant. In particular, I think that the discussion of the Cambrian explosion in Stephen Jay Gould’s Wonderful Life is illuminated by Wolfram’s metaphor of mining the computational universe, and that the mysterious origin of life, which is actually the spontaneous discovery of software by Nature, is illuminated by Wolfram’s thesis that it is easy to build a universal Turing machine out of almost any discrete combinatorial components.

– From Irreducibility and Computational Equivalence: 10 Years After Wolfram’s A New Kind of Science, in the book series Emergence, Complexity and Computation.

Commercially available memories are available only in finite sizes (more’s the pity).. In order to make such memories useable to our processor we must interpose between EVAL and the storage system a storage manager which makes a finite vector memory appear to the evaluation mechanism to be an infinite linked-record memory.. The catch is that at no time may more records be active than will fit into the finite memory actually provided. The memory is “apparently infinite” in the sense that an indefinitely large number of new records can be “created” using the CONS operator. The storage manager recycles discarded records in order to create new ones in a manner completely invisible to the evaluator.

..A final philosophical thought: it may be worth considering kinds of “stuff” other than vectors and linked records to use for representing data. For example, in LISP we generally organize the records only into trees rather than general graphs. Other storage organizations should also be explored. The crucial idea, however, is that the instruction set should then be fit into the new storage structure in some natural and interesting way, thereby representing programs in terms of the data structures. Continuing the one example, we might look for an evaluation mechanism on general graphs rather than on trees, or on whatever other storage structure we choose.

Finally, the instruction set, besides being represented in terms of the data structures, must include means for manipulating those structures. Just as the usual computer has ADD and AND; just as the LISP architecture presented here must supply CAR, CDR, and CONS; so a graph architecture must provide graph manipulation primitives, etc. Following this paradigm we may discover yet other interesting architectures and interpretation mechanisms.

– Design of LISP-Based Processors - LAMBDA: The Ultimate Opcode

https://research.scheme.org/lambda-papers/lambda-papers-ltu-opcode.html

..A portable low-level bytecode called WebAssembly. It offers compact representation, efficient validation and compilation, and safe low to no-overhead execution. Rather than committing to a specific programming model, WebAssembly is an abstraction over modern hardware, making it language-, hardware-, and platform-independent, with use cases beyond just the Web.

..WebAssembly computation is based on a stack machine; code for a function consists of a sequence of instructions that manipulate values on an implicit operand stack, popping argument values and pushing result values. However, thanks to the type system, the layout of the operand stack can be statically determined at any point in the code, so that actual implementations can compile the data flow between instructions directly without ever materializing the operand stack. The stack organization is merely a way to achieve a compact program representation, as it has been shown to be smaller than a register machine.

..The stack layout is fixed (branches must push and pop the same number of operands), so Wasm engines can compile the stack away, producing efficient machine code operations on registers, rather than managing a stack at runtime. A stack machine architecture was chosen as it can have higher code density than a register machine.

– Bringing the web up to speed with WebAssembly

https://dl.acm.org/doi/10.1145/3062341.3062363

Well, while it was fun to write, this comment doesn’t do justice to the topic of catlangs. I wanted to describe how my philosophical love of Lisp as a universal language of computation is enriched and challenged by its encounter with assembly language and stack-based machine instructions - and, indirectly, how a concatenative paradigm like Forth seems better suited for expressing the actual concrete computational process happening at the level of the machine.

In a concatenative programming language, things are evaluated by composing several functions which all operate on a single piece of data, passed from function to function. This piece of data is usually in the form of a stack. Additionally, this function composition is indicated by concatenating programs. Examples of concatenative languages include Forth, Joy, PostScript, Cat, and Factor.

Although the terms stack language and concatenative language sometimes get thrown around interchangeably, they actually represent similar but distinct classes of languages. A concatenative language is not necessarily a stack language. For example, Om uses prefix notation, rather than postfix, and passes the remainder of the program as the data from function to function. See Deque for another example of a stack-free concatenative language.

– concatenative.org/wiki