Logic Alphabet, Visual Variables, Tangible Values. Sémiologie des Graphiques: Diagrams, Networks, Maps. On a fruitful angle of inquiry, study and research.

At sea, to communicate from one ship to another, or from a ship to a seaport.. A prisoner can, through the bars of the window, express thoughts to the outside world .. By playing tones, or if at night, by displaying bright lanterns or fires of the 7 colors.

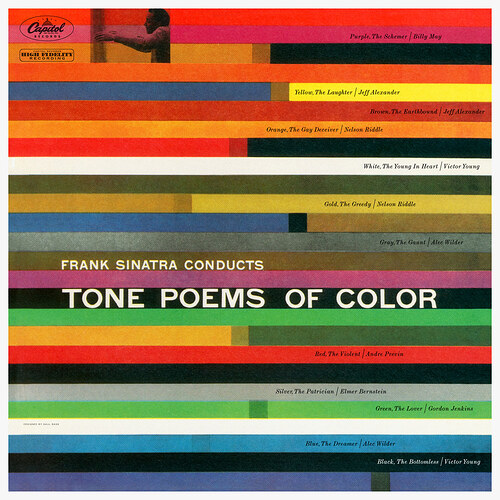

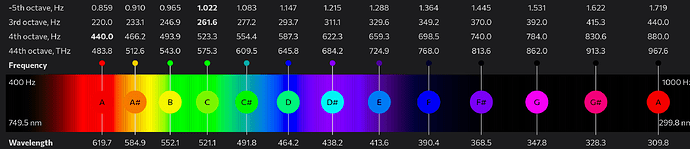

Solresol and the Shavian alphabet are beautiful, conceptual works of art. Lovely correspondence of colors, musical notes, and language. And software keyboard input, too.

Building blocks of logic - Über die Bausteine der mathematischen Logik. Programs as proofs, and proofs as programs.

A tree algebra for XML documents, JSON structures, and query patterns. Fudgets: Graphical User Interface in a Lazy Functional Language.

Communicating sequential processes (1978, Tony Hoare).

This paper suggests that input and output are basic primitives of programming and that parallel composition of communicating sequential processes is a fundamental program structuring method. – Bell Labs and CSP Threads

jq, a command-line JSON processor, I learned is a concatenative language to process and query a stream of JSON over Unix pipes - to slice, filter, map and transform structured data. jaq, a Rust variant with a new twist on the concept.

[1, 2, 3] | .[] |= .+1 ⟼ [2, 3, 4]

0 | while(. <= 3; . + 1) ⟼ 0 1 2 3

2 | limit(7; repeat(1, ., 3)) ⟼ 1 2 3 1 2 3 1

[0, 1, 2, 3] | map(.*2) | [.[] | select(. < 5)] ⟼ [0,2,4]

Streams are a first-class language primitive, even booleans and numbers are filters that return their respective values. And how casually it creates an infinite sequence with recurse and repeat.

How the data flows through a pipeline of expressions with lazily evaluated values.

Feels like a good fit for the heartbeat generator (clock, metronome) I started: the ![]() function is an infinite sequence yielding values over time. For the syntax I’d prefer an extended JSON with pipeline

function is an infinite sequence yielding values over time. For the syntax I’d prefer an extended JSON with pipeline |> operator (and syntax sugar like comments, optional trailing comma, unquoted object property names); maybe something that can easily run on, for example MicroQuickJS, a JS engine for microcontrollers.

catlang based on Solresol

Oh I like that idea!

Wittgenstein defines philosophy as a kind of homelessness. “Bringing words back to the language games that are their original homes.”

How to Make Trouble and Influence People: Pranks, Protests, Graffiti & Political Mischief-Making. Détournement and recuperation. Culture jamming, broadcast signal intrusion, psychogeography, monochrom. Care farming, biophilia hypothesis, green exercise, and guerilla gardening. Groupe μ, a collective of semioticians. Counter-television.

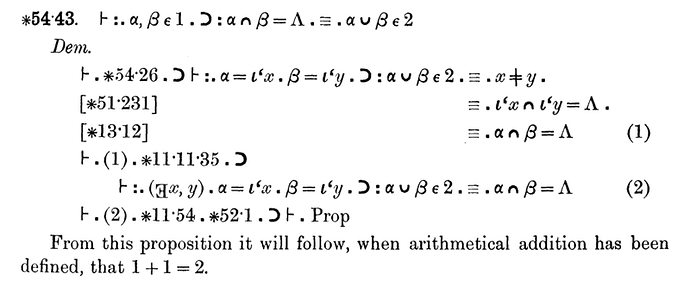

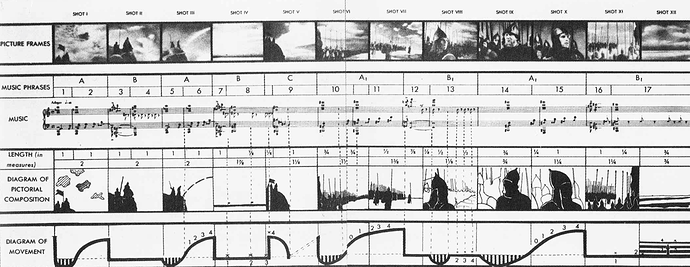

Above an example of notation used in Principia Mathematica (1910). I’m starting to see why there is not only one language, but an endless variety and permutations of graphemes, ligatures, vowels and consonants, digits, letters, symbols, gestures. Below an audio-visual sequence diagram from director Sergei Eisenstein’s film Alexander Nevsky (1938).

Graphomania (from Ancient Greek: γρᾰ́φειν, gráphein, “to write”; and μᾰνῐ́ᾱ, maníā, “madness, frenzy”) is an obsessive impulse to write.

Graphorrhea is a communication disorder involving excessive wordiness, incoherent rambling, or frequent digressions in writing. The ramblings may be grammatically correct, but still leave the reader confused and unsure what the piece is about.

Hypergraphia is a behavioral condition characterized by the intense desire to write or draw.

Aw how fun would it be to have a magazine of hypermedia literature called Hypergraphia.

Proofs as Programs.

It turns out that normalization corresponds to performing computations in a functional language. To give an example, suppose that we do not take all numbers as primitive, but instead start with just

0(zero) and the successor functionsthat adds one to zero. Thus we could represent the number3as the terms(s(s(0))). We can also represent a function for adding2toxas a termλx.s(s(x))of typeN -> N. Now consider a proofλx.s(s(x)) : N -> N s(s(s(0))) : N ---- λx.s(s(x))[s(s(s(0)))] : NLambda reduction of the final proof term,

λx.s(s(x))[s(s(s(0)))]yieldss(s(s(s(s(0))))), which is our internal representation for5, the result of adding2to3.– Linear Logic for Linguists

Repeatedly applying rewriting rules to an expression and reducing it to its simplest form, is equivalent to evaluating a program.

Laurie Spiegel’s Music Mouse. Interview - On Algorithmic Composition (1987)

- The Programming Language as a Musical Instrument

- Bricolage Programming in the Creative Arts

- Other papers from the Psychology of Programming Interest Group

- Programming Pearls: Little Languages

- Little Languages for Music - Peter Langston (PDF)

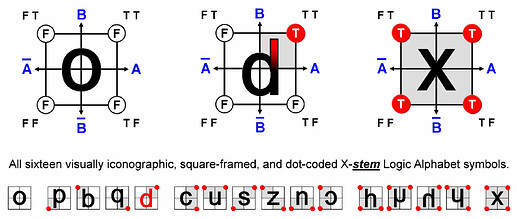

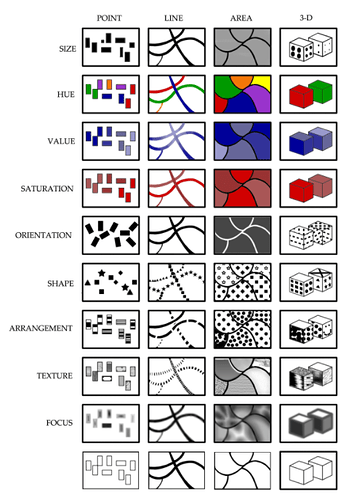

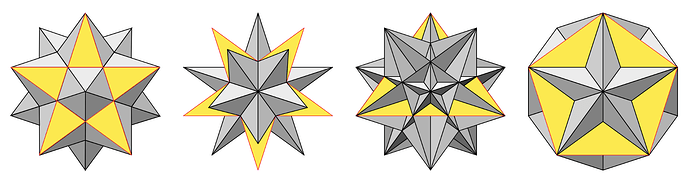

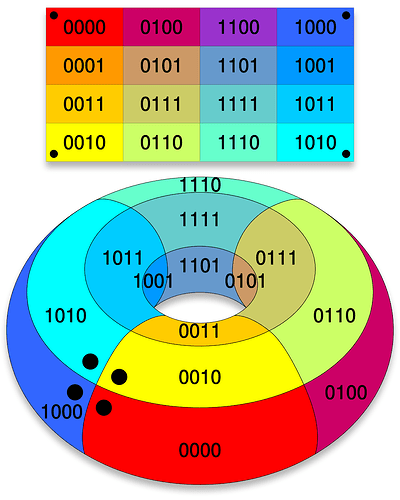

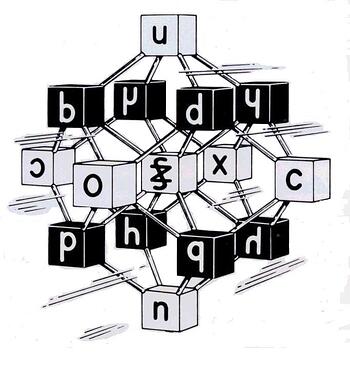

Shea Zellweger’s logic alphabet offers a visually systematic way of representing each of the sixteen binary truth functions.

The idea is to represent the truth functions in the form of a square matrix, and then to assign a letter shape to each of these matrices. When drawing a logic symbol, one passes through each square with assigned F values while stopping in a square with assigned T values.

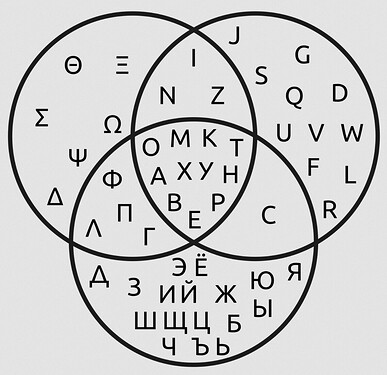

Venn diagram of Greek, Latin and Russian Cyrillic upper case graphemes.

A precursor of Boolean algebra was Gottfried Wilhelm Leibniz’s algebra of concepts. The usage of binary in relation to the I Ching was central to Leibniz’s characteristica universalis. It eventually created the foundations of algebra of concepts, which is deductively equivalent to the Boolean algebra of sets.

Ludwig Wittgenstein introduced a version of the 16-row truth table as proposition 5.101 of Tractatus Logico-Philosophicus (1921).

From 1934 to 1936, NEC engineer Akira Nakashima, Claude Shannon and Victor Shestakov introduced switching circuit theory in a series of papers showing that two-valued Boolean algebra, which they discovered independently, can describe the operation of switching circuits. Using this property of electrical switches to implement logic is the fundamental concept that underlies all electronic digital computers.

"She is not manipulating the machine by turning knobs or pressing buttons. She is writing messages to it by spelling out instructions letter by letter. Her painfully slow typing seems laborious to adults, but she carries on with an absorption that makes it clear that time has lost its meaning for her.” – Sherry Turkle on Robin, aged 4, programming a computer.

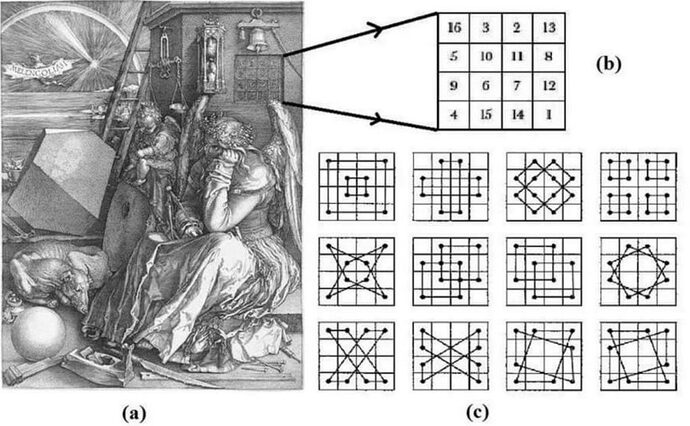

Albrecht Dürer’s magic square in his engraving Melencolia I (1514) is a symmetrical square with 86 sum combinations of the number 34.

| 16 | 3 | 2 | 13 |

| 5 | 10 | 11 | 8 |

| 9 | 6 | 7 | 12 |

| 4 | 15 | 14 | 1 |

Write a short program to draw all the shapes.

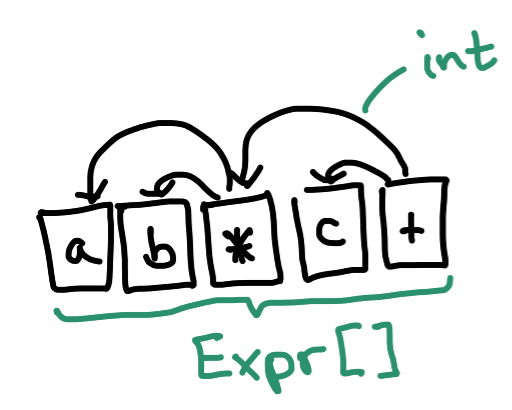

An insight into linear logic, in an article I was reading about compilers. It discussed a technique of flattening the abstract syntax tree and other data structures.

- Flattening ASTs (and Other Compiler Data Structures) - Adrian Sampson, Department of Computer Science at Cornell University

Why?

- Performance

- Locality. ..Flattened

Exprs are packed together in a contiguous region of memory, which is good for spatial locality. Your data caches will work better.- Cheap allocation. In flatland, there is no need for a call to

mallocevery time you create a new AST node. Instead, provided you pre-allocate enough memory to hold everything, allocation can entail just bumping the tail pointer to make room for one moreExpr.- Cheap deallocation.. Smaller references..

- Ergonomics

- Easier lifetimes.. Convenient deduplication

Exploiting the Flat Representation

What could we do if we exploited stuff about the flattened representation that isn’t true about normal AST style?

..Visually, you can imagine that reference arrows always go backward in the array, and data always flows forward.

Let’s write a new interpreter that exploits this invariant. Instead of starting at the root of the tree and recursively evaluating each child, we can start at the beginning of the

ExprPooland scan from left to right. This iteration is guaranteed to visit parents after children, so we can be sure that the results for subexpressions will be ready when we need them.

The tree flattened into a stream is essentially a stack-based concatenative style program in postfix notation.

This “extra-flat” interpreter has two potential performance advantages over the recursive interpreter: there’s no stack bookkeeping for the recursive calls, and the linear traversal of the

ExprPoolcould be good for locality.My favorite observation about this technique, due to a Reddit comment by Bob Nystrom, is that it essentially reinvents the idea of a bytecode interpreter. It’s pretty nifty that “merely” changing our AST data structure led us directly from the land of tree walking to the land of bytecode.

“I can imagine, while parsing, whenever you created a new node, rather than allocating space just for it and giving the parent node the pointer, you could push the node onto a vector or some other such growable contiguous array and give the parent a reference to that spot.”

Yes, that will definitely help! Once you do that, you may consider other stepwise refinements.

- Since subexpressions are executed first, it makes sense to have them earlier in the array than their parents. So instead of walking from the root to the leaves, walk the leaves first and then have the parents follow them.

- At that point, parents no longer need references to their children. Instead, you just need some convenient place to store the results of the child evaluations. Maybe a stack.

- Now your array of instructions doesn’t need any actual links between them. It’s just a flat list of which operations to perform.

Ta-da, you just reinvented stack-based bytecode.

- Mike Pall attributes some of LuaJIT’s performance to its “linear, pointer-free IR.” It’s pointer-free because it’s flattened.

- The Oil shell project has a big collection of links all about “compact AST representation,” much of which boils down to flattening.

Flattening-like ideas appear a lot in data-oriented design. Beyond just language implementation, similar concepts show up in other performance-oriented domains.

The pursuit led me to:

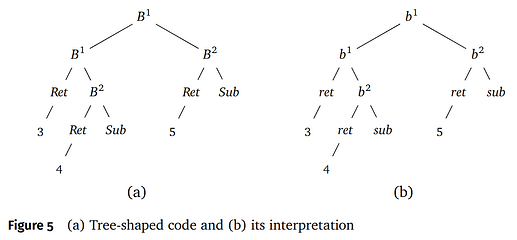

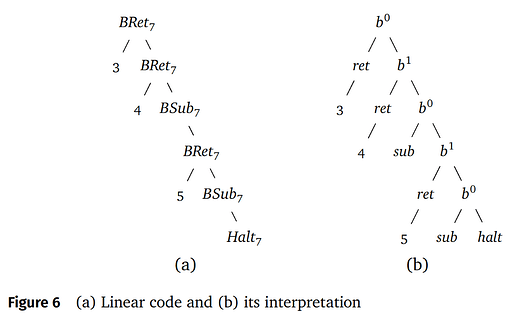

Interpreters and Compilers: Tree-Shaped Code and Linear Code.

Associativity of generalized composition justifies rotation of the tree-shaped code in figure 5 into linear form, as shown in figure 6.

Then we can unroll the definition of eval′5 on expr, as in figure 5(b).. The end result is shown in figure 6(b); note in particular that the generalized compositions now all nest to the right.

There is a well-known compilation scheme from expressions to a stack machine. The observation that associativity of generalized composition leads directly to linearly structured target code is due to Wand (Continuation-based program transformation strategies), and explored in detail for more sophisticated languages by Henson (Elements of Functional Languages).

There’s even a shout-out to Smullyan’s bluebird, blackbird, and bunting combinators from To Mock a Mockingbird, and Other Logic Puzzles.

..Going into a forest and discussing the unusual “birds” (combinators) they find there.

Bird-watching was a hobby of one of the founders of combinatory logic, Haskell Curry, while the name of another founder, Moses Schönfinkel, means beautiful spark, or possibly beautiful little finch.

Each species of bird in Smullyan’s forest stands for a particular kind of combinator appearing in the conventional treatment of combinatory logic. Each bird has a distinctive call, which it emits when it hears the call of another bird. Hence, an initial call gives rise to a cascading sequence of calls by a succession of birds.

In a seminal paper (Definitional interpreters for higher-order programming language), Reynolds showed how to use continuation-passing style (CPS) and defunctionalization to take a recursive interpreter for a language and transform it into an abstract machine for executing programs in that language.

We revisit some well-known applications (factorial, fast reverse, tree flattening, and a compiler

for a simple expression language) to spotlight their dependence on the algebraic property of associativity.

Continuation-passing style transformation—which after all is a matter of sequentializing code that would otherwise be tree-structured—is connected to associativity.

Linear logic and concatenative languages are closer to writing a stream of bytecode instructions. Even tree-like languages like LISP, or syntax trees of any language, are typically compiled into a flat structure before running on a real machine or an interpreter, essentially an abstract machine.